Native Google Kubernetes Engine (GKE) support for Chainloop

Miguel Martinez

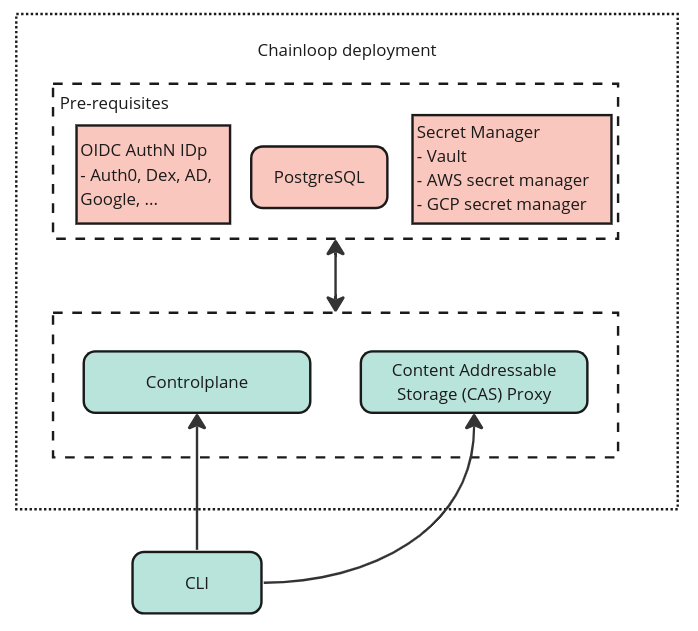

Deployment overview

Chainloop is an open source, cloud-native application that can be deployed on any Kubernetes cluster using this Helm Chart.

Its architecture is fairly simple. There are three core components (highlighted green): two services and one CLI. These have external dependencies, such as a relational database, an Identity Provider, and a secure secret storage backend.

When deploying this stack to a cloud provider, it’s common practice to leverage some of their managed services. For example, if you deploy Chainloop to Elastic Kubernetes Service (EKS) on AWS, you might opt for an RDS PostgreSQL database or AWS Secrets Manager. The same applies to Google Cloud Platform or Azure.

This level of pluggability has been present from the beginning, but a new set of contributions in our latest release has taken this vendor integration to the next level.

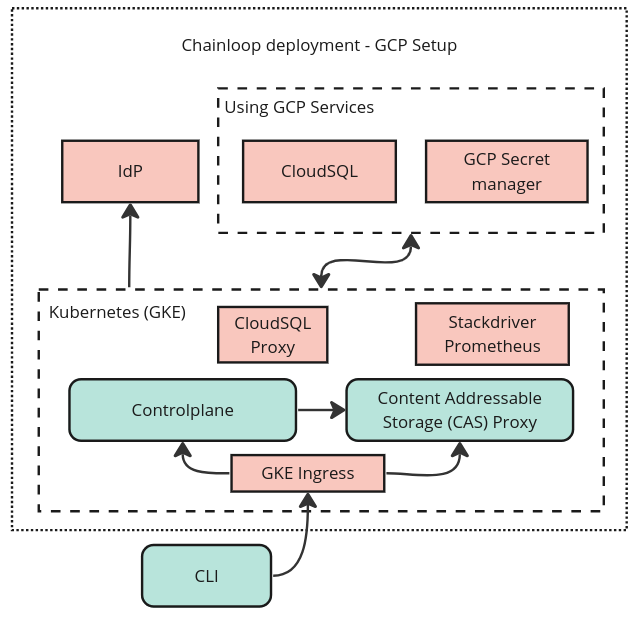

Native Google Kubernetes Engine (GKE) setup

Now, setting up Chainloop on Google Cloud can leverage not only managed services (such as Cloud SQL) but also native primitives in GKE itself. This enables best practices, reduces moving parts, and improves production readiness in general:

- Instead of connecting to the Cloud SQL database directly from your application, you can now use Cloud SQL Auth Proxy, which is the recommended setup.

- Our cloud-agnostic setup recommends using the NGINX ingress controller as a reverse proxy. Now you can also leverage GKE’s managed ingress natively.

- Because we all love to have our nice metrics and charts, support for GKE-managed Prometheus has been added.

- Pod-level TLS termination has been added, which is both a requirement for using GKE ingress and a step up in production readiness.
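
To make the first point more concrete, here is a minimal sketch of the Cloud SQL Auth Proxy sidecar pattern: the proxy runs next to the application container, and the application connects to it on localhost instead of reaching Cloud SQL directly. This is an illustration only, not the configuration used by the Chainloop Helm Chart. The helper names, instance connection name, image tag, user, and database name are all hypothetical placeholders.

```python
import json

# Assumption: image repository of Cloud SQL Auth Proxy v2.
# The tag is passed in explicitly; pin the version you actually run.
PROXY_IMAGE = "gcr.io/cloud-sql-connectors/cloud-sql-proxy"


def proxy_sidecar(instance_connection_name, tag, port=5432):
    """Return a Kubernetes container spec (as a dict) for the proxy sidecar.

    instance_connection_name is the Cloud SQL instance connection name,
    typically of the form project:region:instance.
    """
    return {
        "name": "cloud-sql-proxy",
        "image": f"{PROXY_IMAGE}:{tag}",
        # The proxy listens on a local port and forwards to the instance.
        "args": [f"--port={port}", instance_connection_name],
        "securityContext": {"runAsNonRoot": True},
    }


def local_dsn(user, database, port=5432):
    """DSN for the application: it talks to the proxy on localhost."""
    return f"postgresql://{user}@127.0.0.1:{port}/{database}"


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    sidecar = proxy_sidecar("my-project:europe-west1:chainloop-db", tag="2.8.0")
    print(json.dumps(sidecar, indent=2))
    print(local_dsn("chainloop", "controlplane"))
```

The useful property of this layout is that the application only ever sees a local PostgreSQL endpoint, while authentication and encryption towards Cloud SQL are handled by the proxy.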

We’re excited to witness external contributors encountering initial deployment challenges and limitations (yes, really :). Even more importantly, we’re thrilled to see them actively contribute improvements. Deploying Chainloop to production is now better than ever!

Don’t forget to give our latest release a try and share any feedback; it’s always welcome! :)

Cheers, Miguel